Configuration ¶

Ingress ¶

In order to make the Atlassian product available from outside of the Kubernetes cluster, you must provision a suitable HTTP/HTTPS traffic entry controller.

The Helm charts support exposing products using either:

- Kubernetes Ingress resources (via an Ingress controller), or

- Kubernetes Gateway API resources (via a Gateway API controller), by creating an HTTPRoute.

The exact details will be highly site-specific. These Helm charts were tested using the NGINX Ingress Controller. We also provide example instructions on how controllers can be installed and configured.

The charts themselves provide templates for either Ingress or HTTPRoute resources (depending on configuration). These include the required knobs for configuring hostnames, paths, timeouts, and (for NGINX Ingress) annotations.
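As a rough sketch of those knobs for the Ingress case, the fragment below sets a hostname, a path and longer proxy timeouts. The `host`, `proxyReadTimeout` and `proxySendTimeout` names appear elsewhere on this page; the `path` key name, the placement of the timeouts under `ingress`, and all values shown are assumptions to be checked against the values.yaml of your chart version.

```yaml
# Illustrative values only; verify key names against your chart's values.yaml
ingress:
  create: true
  nginx: true
  host: jira.example.com      # hypothetical hostname
  path: "/"                   # assumed key name
  proxyReadTimeout: 300       # assumed to be in seconds
  proxySendTimeout: 300       # assumed to be in seconds
```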

Some key considerations to note when configuring the controller are:

Ingress

- At a minimum, the ingress needs the ability to support long request timeouts, as well as session affinity (aka "sticky sessions").

- The Ingress template provided as part of the Helm charts is geared toward the NGINX Ingress Controller and can be configured via the ingress stanza in the appropriate values.yaml. Some key aspects that can be configured include:

    - Usage of the NGINX Ingress Controller

    - Ingress Controller annotations

    - The request max body size

    - The hostname of the ingress resource

- When installed, with the provided configuration, the NGINX Ingress Controller will provision an internet-facing (see diagram below) load balancer on your behalf. The load balancer should either support the Proxy Protocol or allow for the forwarding of X-Forwarded-* headers. This ensures any backend redirects are done over the correct protocol.

- If the X-Forwarded-* headers are being used, then enable the use-forwarded-headers option on the controller's ConfigMap. This ensures that these headers are appropriately passed on.

- The diagram below provides a high-level overview of how external requests are routed via an internet-facing load balancer to the correct service via Ingress.

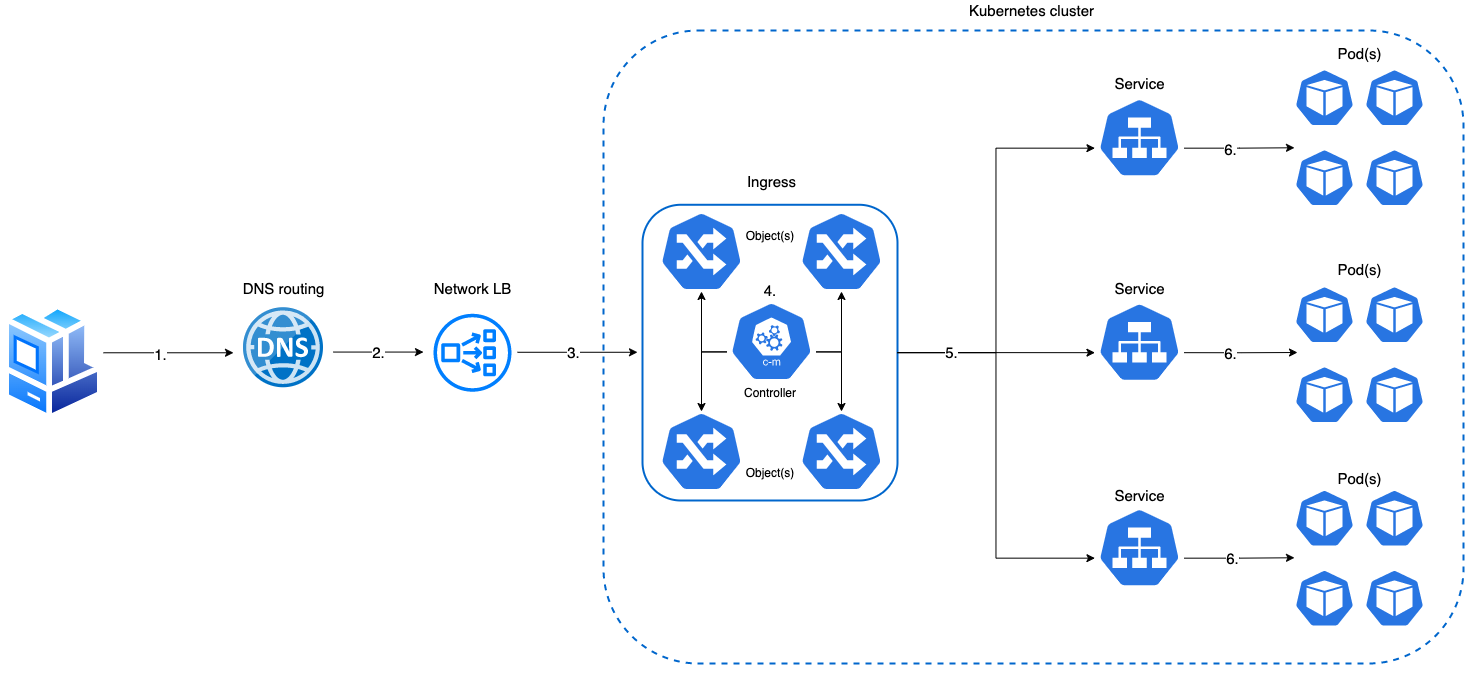

Traffic flow (diagram)

- Inbound client request

- DNS routes request to appropriate LB

- LB forwards request to internal Ingress

- Ingress controller performs traffic routing lookup via Ingress object(s)

- Ingress forwards request to appropriate service based on Ingress object routing rule

- Service forwards request to appropriate pod

- Pod handles request
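The use-forwarded-headers option mentioned in the considerations above is set in the NGINX Ingress Controller's ConfigMap. A minimal sketch follows; the ConfigMap name and namespace are assumptions and depend on how your controller was installed.

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: ingress-nginx-controller   # assumed name; depends on your controller install
  namespace: ingress-nginx         # assumed namespace
data:
  # Pass incoming X-Forwarded-* headers through to the backends
  use-forwarded-headers: "true"
```

If the controller was installed with Helm, the same option is usually set through that chart's values rather than by editing the ConfigMap directly.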

Request body size

By default, the maximum allowed request body size is 250MB. If a request body exceeds this limit, a 413 error is returned to the client. The limit can be changed by setting maxBodySize in values.yaml.
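A minimal sketch of raising the limit is shown below. It assumes that `maxBodySize` lives under the `ingress` stanza and accepts an NGINX-style size string; check both against your chart's values.yaml.

```yaml
ingress:
  create: true
  nginx: true
  maxBodySize: 500m   # assumed format; illustrative value
```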

Session Stickiness for High Availability ¶

NGINX Ingress Controller ¶

Session stickiness is automatically enabled when using NGINX Ingress Controller:

ingress:
  create: true
  nginx: true # Automatically enables session stickiness
  host: bitbucket.example.com

Custom Configuration ¶

ingress:
  create: true
  nginx: true
  host: bitbucket.example.com
  annotations:
    "nginx.ingress.kubernetes.io/session-cookie-name": "BITBUCKET-SESSION"
    "nginx.ingress.kubernetes.io/session-cookie-max-age": "28800" # 8 hours
    "nginx.ingress.kubernetes.io/session-cookie-change-on-failure": "true"

Verify Configuration ¶

# Check ingress annotations

kubectl describe ingress bitbucket -n atlassian

# Test with curl (look for Set-Cookie header)

curl -I https://bitbucket.example.com/status

Common Issues ¶

| Issue | Solution |
|---|---|
| Users getting logged out randomly | Verify session stickiness annotations are applied |
| Requests routing to different pods | Check if clustering is enabled |
| Session cookies not working | Ensure ingress controller supports session affinity |

Gateway API (HTTPRoute) ¶

The charts can create a Gateway API HTTPRoute instead of an Ingress resource.

ingress:
  create: false

gateway:
  create: true
  hostnames:
    - bitbucket.example.com
  https: true
  parentRefs:
    - name: atlassian-gateway
      namespace: gateway-system # optional

The gateway stanza supports additional options for fine-tuning the HTTPRoute:

- parentRefs – list of ParentReference objects pointing to your Gateway resource (supports name, namespace, sectionName, etc.)

- externalPort – non-standard port that users connect on (defaults to 443/80)

- timeouts – request and backendRequest timeouts (replaces Ingress proxyReadTimeout/proxySendTimeout)

- filters – HTTPRouteFilter list for header modification, redirects, or URL rewrites

- additionalRules – extra HTTPRouteRule entries for advanced routing (traffic splitting, header-based routing)

- annotations / labels – metadata applied to the HTTPRoute resource
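For illustration, a gateway stanza that also sets timeouts might look like the sketch below. The `timeouts.request` and `timeouts.backendRequest` keys are taken from the option list above; the duration values and their format (Gateway API duration strings such as `60s`) are assumptions.

```yaml
gateway:
  create: true
  hostnames:
    - bitbucket.example.com
  https: true
  parentRefs:
    - name: atlassian-gateway
  timeouts:
    request: 60s          # illustrative value, assumed duration format
    backendRequest: 60s   # illustrative value, assumed duration format
```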

Gateway mode without creating an HTTPRoute

Setting gateway.hostnames activates gateway mode for the product's proxy and base-URL configuration even when gateway.create is false. This allows use with a pre-existing Gateway or external proxy/load balancer.

For the full list of values and usage examples, see the Gateway API guide.

Sticky sessions with Gateway API

Session affinity is not part of the standard Gateway API HTTPRoute spec. You must configure it using your Gateway implementation (for example, Envoy Gateway policy resources or Istio traffic policy). See Session affinity with Gateway API for working examples and fallbacks.

Other Ingress Controllers ¶

For other ingress controllers (AWS ALB, Google Cloud Load Balancer, Azure Application Gateway), refer to your controller's documentation for session stickiness configuration.

LoadBalancer/NodePort Service Type ¶

Feature Availability

Service session affinity configuration is available starting from Helm chart version 1.13.

It is possible to make the Atlassian product available from outside of the Kubernetes cluster without using an ingress controller.

NodePort Service Type ¶

When installing the Helm release, you can set:

jira:
  service:
    type: NodePort
    sessionAffinity: ClientIP
    sessionAffinityConfig:
      clientIP:
        timeoutSeconds: 10800

The service port will be exposed on all worker nodes on a random port from the default NodePort range (30000-32767). You can also set the port explicitly in service.nodePort (make sure it is not already used by another service in the cluster). You can then provision a LoadBalancer with a listener on 443 or 80 (or both) that forwards traffic to the node port (you can get the service node port by running kubectl describe $service -n $namespace). Both the LoadBalancer and the Kubernetes service should be configured to maintain session affinity: configure LoadBalancer session affinity as per the instructions for your Kubernetes/cloud provider, and configure service session affinity by overriding the default Helm chart values (see the above example). Make sure you configure networking rules that allow the LoadBalancer to communicate with the Kubernetes cluster worker nodes on the node port.
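A sketch of pinning the node port explicitly is shown below. The port number is a hypothetical example and must be free in your cluster; the placement of `nodePort` under `jira.service` follows the `service.nodePort` reference above.

```yaml
jira:
  service:
    type: NodePort
    nodePort: 30080   # hypothetical port; must be unused in the cluster
    sessionAffinity: ClientIP
    sessionAffinityConfig:
      clientIP:
        timeoutSeconds: 10800
```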

Tip

For more information about Kubernetes service session affinity, see Kubernetes documentation.

LoadBalancer Service Type ¶

LoadBalancer service type is the automated way to expose the service on the node port, create a LoadBalancer for it and configure networking rules allowing communication between the LoadBalancer and the Kubernetes cluster worker nodes:

jira:
  service:
    type: LoadBalancer
    sessionAffinity: ClientIP
    sessionAffinityConfig:
      clientIP:
        timeoutSeconds: 10800

AWS EKS users

If you install the Helm chart for the first time, you will need to skip overriding sessionAffinity in your values.yaml, otherwise the LoadBalancer will not be created and you will see the following error:

Error syncing load balancer: failed to ensure load balancer: unsupported load balancer affinity: ClientIP

Once the LoadBalancer has been created without sessionAffinity, run helm upgrade with $productName.service.sessionAffinity set to ClientIP.

Volumes ¶

The Data Center products make use of filesystem storage. Each DC node has its own local-home volume, and all nodes in the DC cluster share a single shared-home volume.

By default, the Helm charts will configure all of these volumes as ephemeral emptyDir volumes. This makes it possible to install the charts without configuring any volume management, but comes with two big caveats:

- Any data stored in the local-home or shared-home will be lost every time a pod starts.

- Whilst the data that is stored in local-home can generally be regenerated (e.g. from the database), this can be a very expensive process that sometimes requires manual intervention.

For these reasons, the default volume configuration of the Helm charts is suitable only for running a single DC pod for evaluation purposes. Proper volume management needs to be configured in order for the data to survive restarts, and for multi-pod DC clusters to operate correctly.

While you are free to configure your Kubernetes volume management in any way you wish, within the constraints imposed by the products, the recommended setup is to use Kubernetes PersistentVolumes and PersistentVolumeClaims.

The local-home volume requires a PersistentVolume with ReadWriteOnce (RWO) capability, and shared-home requires a PersistentVolume with ReadWriteMany (RWX) capability. Typically, this will be an NFS volume provided as part of your infrastructure, but some public-cloud Kubernetes engines provide their own RWX volumes (e.g. AWS EFS, Google Filestore, Azure Files). While this entails a higher upfront setup effort, it gives the best flexibility.

Volumes configuration ¶

By default, the charts will configure the local-home and shared-home values as follows:

volumes:
  - name: local-home
    emptyDir: {}
  - name: shared-home
    emptyDir: {}

As explained above, this default configuration is suitable only for evaluation or testing purposes. Proper volume management needs to be configured.

Bitbucket default shared-home location

For a single node Bitbucket deployment, if no shared-home volume is defined, then a subpath of local-home will automatically be used for this purpose, namely: <LOCAL_HOME_DIRECTORY>/shared. This behaviour is specific to Bitbucket itself and is not orchestrated via the Helm chart.

In order to enable the persistence of data stored in these volumes, it is necessary to replace these volumes with something else.

The recommended way is to enable the use of PersistentVolume and PersistentVolumeClaim for both volumes, using your install-specific values.yaml file, for example:

volumes:
  localHome:
    persistentVolumeClaim:
      create: true
  sharedHome:
    persistentVolumeClaim:
      create: true

This will result in each pod in the StatefulSet creating a local-home PersistentVolumeClaim of type ReadWriteOnce, and a single PersistentVolumeClaim of type ReadWriteMany being created for the shared-home.

For each PersistentVolumeClaim created by the chart, a suitable PersistentVolume needs to be made available prior to installation. These can be provisioned either statically or dynamically, using an auto-provisioner.
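For the static case, a PersistentVolume for shared-home backed by NFS could be sketched as follows. This is a plain Kubernetes manifest, not part of the Helm charts; the resource name, capacity, NFS server and export path are all hypothetical and must be adapted to your environment.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: shared-home-pv          # hypothetical name
spec:
  capacity:
    storage: 10Gi               # illustrative size
  accessModes:
    - ReadWriteMany             # shared-home requires RWX
  persistentVolumeReclaimPolicy: Retain
  nfs:
    server: mynfsserver         # hypothetical NFS server
    path: /export/path          # hypothetical export path
```

Whether the chart's PersistentVolumeClaim binds to this volume depends on matching capacity, access modes and storage class, so check those against the claim the chart creates.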

An alternative to PersistentVolumeClaims is to use inline volume definitions, either for local-home or shared-home (or both), for example:

volumes:
  localHome:
    customVolume:
      hostPath:
        path: /path/to/my/data
  sharedHome:
    customVolume:
      nfs:
        server: mynfsserver
        path: /export/path

Generally, any valid Kubernetes volume resource definition can be substituted here. However, as mentioned previously, externalising the volume definitions using PersistentVolumes is the strongly recommended approach.

Volumes examples ¶

- Bitbucket needs a dedicated NFS server providing persistence for a shared home if you are not using Bitbucket Mesh. Prior to installing the Helm chart, a suitable NFS shared storage solution must be provisioned. The exact details of this resource will be highly site-specific, but you can use this example as a guide: Implementation of an NFS Server for Bitbucket. Please also review Bitbucket Supported platforms.

- We have an example detailing how an existing EFS filesystem can be created and consumed using static provisioning: Shared storage - utilizing AWS EFS-backed filesystem.

- You can also refer to an example on how a Kubernetes cluster and helm deployment can be configured to utilize AWS EBS backed volumes: Local storage - utilizing AWS EBS-backed volumes.

Additional volumes ¶

In addition to the local-home and shared-home volumes that are always attached to the product pods, you can attach your own volumes and mount them into the product container. Use the additional (under volumes) and additionalVolumeMounts values to attach the volumes and mount them into the product container.

This might be useful if, for example, you have a custom plugin that requires its own filesystem storage.

Example:

jira:
  additionalVolumeMounts:
    - volumeName: my-volume
      mountPath: /path/to/mount

volumes:
  additional:
    - name: my-volume
      persistentVolumeClaim:
        claimName: my-volume-claim

Database connectivity ¶

The products need to be supplied with the information they need to connect to the database service. Configuration for each product is mostly the same, with some small differences.

database.url ¶

All products require the JDBC URL of the database. The format of this URL depends on the JDBC driver being used, but some examples are:

| Vendor | JDBC driver class | Example JDBC URL |
|---|---|---|
| PostgreSQL | org.postgresql.Driver | jdbc:postgresql://<dbhost>:5432/<dbname> |
| MySQL | com.mysql.jdbc.Driver | jdbc:mysql://<dbhost>/<dbname> |
| SQL Server | com.microsoft.sqlserver.jdbc.SQLServerDriver | jdbc:sqlserver://<dbhost>:1433;databaseName=<dbname> |
| Oracle | oracle.jdbc.OracleDriver | jdbc:oracle:thin:@<dbhost>:1521:<SID> |

Database creation

The Atlassian product doesn't automatically create the database (<dbname> in the JDBC URL), so you need to manually create a user and a database on the database instance you are using. Details on how to create product-specific databases can be found below:

database.driver ¶

Jira and Bitbucket require the JDBC driver class to be specified (Confluence and Bamboo will autoselect this based on the database.type value, see below). The JDBC driver must correspond to the JDBC URL used; see the table above for example driver classes.

Note that the products only ship with certain JDBC drivers installed, depending on the license conditions of those drivers.

Non-bundled DB drivers

MySQL and Oracle database drivers are not shipped with the products due to licensing restrictions. You will need to provide additionalLibraries configuration.

database.type ¶

Jira, Confluence and Bamboo all require this value to be specified; it declares the database engine to be used. The acceptable values include:

| Vendor | Jira | Confluence | Bamboo |
|---|---|---|---|
| PostgreSQL | postgres72 | postgresql | postgresql |
| MySQL | mysql57 / mysql8 | mysql | mysql |
| SQL Server | mssql | mssql | mssql |
| Oracle | oracle10g | oracle | oracle12c |
| Aurora PostgreSQL | postgresaurora96 | | |

database.credentials ¶

All products can have their database connectivity and credentials specified either interactively during first-time setup, or automatically by specifying certain configuration via Kubernetes.

Depending on the product, the database.type, database.url and database.driver chart values can be provided. In addition, the database username and password can be provided via a Kubernetes secret, with the secret name specified with the database.credentials.secretName chart value. When all the required information is provided in this way, the database connectivity configuration screen will be bypassed during product setup.
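Putting these values together for Jira on PostgreSQL might look like the sketch below. The two YAML documents are separate files shown in one block: a values.yaml fragment and a Kubernetes Secret manifest. The secret name is hypothetical, and the `username` and `password` key names are an assumption about what the chart expects by default.

```yaml
# values.yaml fragment
database:
  type: postgres72
  url: jdbc:postgresql://<dbhost>:5432/<dbname>
  driver: org.postgresql.Driver
  credentials:
    secretName: jira-db-credentials   # hypothetical secret name
---
# Separate Kubernetes manifest, created before installing the chart
apiVersion: v1
kind: Secret
metadata:
  name: jira-db-credentials           # must match secretName above
type: Opaque
stringData:
  username: jira                      # assumed key name; hypothetical value
  password: change-me                 # assumed key name; hypothetical value
```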

Namespace ¶

The Helm charts are not opinionated about whether they have a Kubernetes namespace to themselves. If you wish, you can run multiple Helm releases of the same product in the same namespace.

Clustering ¶

By default, the Helm charts will not configure the products for Data Center clustering. In order to enable clustering, the enabled property for clustering must be set to true.

Clustering by default for Crowd

Crowd does not offer clustering configuration via Helm Chart. Set crowd.clustering.enabled to true/false in ${CROWD_HOME}/shared/crowd.cfg.xml and rollout restart Crowd StatefulSet after the initial product setup is complete.

jira:
  clustering:
    enabled: true

confluence:
  clustering:
    enabled: true

bitbucket:
  clustering:
    enabled: true

Because of the limitations outlined under Bamboo and clustering, the clustering stanza is not available as a configurable property in the Bamboo values.yaml.

For Crowd, clustering is enabled by default. To disable it, set crowd.clustering.enabled to false in ${CROWD_HOME}/shared/crowd.cfg.xml and rollout restart the Crowd StatefulSet after the initial product setup is complete.

In addition, the shared-home volume must be correctly configured as a ReadWriteMany (RWX) filesystem (e.g. NFS, AWS EFS, Azure Files, GCP Filestore, or any NFS-compatible storage).
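Combining the two requirements, a clustered Jira values file could be sketched as below. It only reuses keys shown elsewhere on this page and assumes that suitable RWO and RWX PersistentVolumes are available for the claims the chart creates.

```yaml
jira:
  clustering:
    enabled: true

volumes:
  localHome:
    persistentVolumeClaim:
      create: true    # one ReadWriteOnce claim per pod
  sharedHome:
    persistentVolumeClaim:
      create: true    # single ReadWriteMany claim shared by all pods
```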

Generating configuration files ¶

The Docker entrypoint scripts generate application configuration on first start; not all of these files are regenerated on subsequent starts. This is deliberate, to avoid race conditions or overwriting manual changes during restarts and upgrades. However, in deployments where configuration is specified purely through the environment (e.g. Kubernetes), this behaviour may be undesirable; the forceConfigUpdate flag forces an update of all generated files.

The affected files are:

- Jira: dbconfig.xml

- Confluence: confluence.cfg.xml

- Bamboo: bamboo.cfg.xml

To force an update of the configuration files when pods restart, set <product_name>.forceConfigUpdate to true. You can do this by passing an argument to the helm install or helm upgrade command:

--set jira.forceConfigUpdate=true

or by setting it in values.yaml:

jira:
  forceConfigUpdate: true

Bitbucket and Bitbucket Mesh configuration file

It's not possible to generate the Bitbucket and Bitbucket Mesh configuration files. Bitbucket uses ${BITBUCKET_HOME}/shared/bitbucket.properties. Any property from the bitbucket.properties file can instead be provided as an environment variable. For example:

bitbucket:
  additionalEnvironmentVariables:
    - name: SEARCH_ENABLED
      value: "false"
    - name: PLUGIN_SEARCH_CONFIG_BASEURL
      value: http://my.opensearch.host

Bitbucket Mesh uses ${BITBUCKET_HOME}/mesh.properties. Any property from the mesh.properties file can likewise be provided as an environment variable. For example:

bitbucket:
  mesh:
    additionalEnvironmentVariables:
      - name: GIT_PATH_EXECUTABLE
        value: /usr/bin/git

To translate a property into an environment variable:

- dot . becomes underscore _

- dash - becomes underscore _

- Example: this.new-property becomes THIS_NEW_PROPERTY
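Applied to the example property above, the translation could be used as in the following sketch. The property `this.new-property` is the placeholder from the rule above, not a real Bitbucket setting, and the value is hypothetical.

```yaml
bitbucket:
  additionalEnvironmentVariables:
    # Equivalent to this.new-property=some-value in bitbucket.properties
    - name: THIS_NEW_PROPERTY
      value: "some-value"   # hypothetical value; quoted so it is passed as a string
```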

Additional config properties ¶

Jira only

This feature is currently available for Jira only. It requires a Jira container image version that supports the ADDITIONAL_JIRA_CONFIG_* environment variables. See the Docker image documentation for full details on the underlying mechanism.

The Helm chart provides dedicated values for injecting properties into jira-config.properties without constructing environment variable names manually:

jira:
  additionalConfigProperties:
    - "jira.websudo.is.disabled=true"
    - "jira.lf.top.bgcolour=#003366"

These are equivalent to setting ADDITIONAL_JIRA_CONFIG_* environment variables directly. The values can also be set via --set:

helm install jira atlassian-data-center/jira \

--set 'jira.additionalConfigProperties[0]=jira.websudo.is.disabled=true'

Injecting secrets ¶

For values that reference Kubernetes Secrets (e.g. passwords), use additionalConfigPropertiesExpandEnv. Placeholders in {VAR_NAME} format are replaced with the corresponding environment variable value at container startup:

jira:
  additionalEnvironmentVariables:
    - name: MY_SECRET
      valueFrom:
        secretKeyRef:
          name: my-k8s-secret
          key: password
  additionalConfigPropertiesExpandEnv:
    - "some.password={MY_SECRET}"

Alternatively, you can use jira.additionalEnvironmentVariables to pass the ADDITIONAL_JIRA_CONFIG_* environment variables explicitly if you need full control over naming.

Additional libraries & plugins ¶

The products' Docker images contain the default set of bundled libraries and plugins. Additional libraries and plugins can be mounted into the product containers during the Helm install. One use case for this is mounting JDBC drivers that are not shipped with the products by default.

To make use of this mechanism, the additional files need to be available as part of a Kubernetes volume. One option is to put them into the shared-home volume that's required as part of the prerequisites. Alternatively, you can create a custom PersistentVolume for them, as long as it has ReadOnlyMany capability.

Custom volumes for loading libraries

If you're not using the shared-home volume, then you can declare your own custom volume, by following the Additional volumes section above.

You could even store the files as a ConfigMap that gets mounted as a volume, but you're likely to run into file size limitations there.

Assuming that the existing shared-home volume is used for this, then the only configuration required is to specify the additionalLibraries in your values.yaml file, e.g.

jira:
  additionalLibraries:
    - volumeName: shared-home
      subDirectory: mylibs
      fileName: lib1.jar
    - volumeName: shared-home
      subDirectory: mylibs
      fileName: lib2.jar

This will mount the lib1.jar and lib2.jar from the mylibs sub-directory from shared-home into the appropriate place in the container.

Similarly, you can use additionalBundledPlugins to load product plugins into the container.
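A sketch of the plugin equivalent is shown below. It assumes that `additionalBundledPlugins` accepts the same `volumeName`, `subDirectory` and `fileName` fields as `additionalLibraries`; the directory and file names are hypothetical.

```yaml
jira:
  additionalBundledPlugins:
    - volumeName: shared-home
      subDirectory: myplugins     # hypothetical directory in shared-home
      fileName: myplugin.jar      # hypothetical plugin file
```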

System plugin

Plugins installed via this method will appear as system plugins rather than user plugins. An alternative to this method is to install the plugins via "Manage Apps" in the product system administration UI.

For more details on the above, and how 3rd party libraries can be supplied to a Pod see the example External libraries and plugins

P1 Plugins ¶

While additionalLibraries and additionalBundledPlugins will mount files to /opt/atlassian/<product-name>/lib and /opt/atlassian/<product-name>/<product-name>/WEB-INF/atlassian-bundled-plugins respectively, plugins built on Atlassian Plugin Framework 1 need to be stored in /opt/atlassian/<product-name>/<product-name>/WEB-INF/lib. While there's no dedicated Helm values stanza for P1 plugins, it is fairly easy to persist them (the below example is for Jira deployed in namespace atlassian):

- In your shared-home, create a directory called p1-plugins:

    kubectl exec -ti jira-0 -n atlassian \
      -- mkdir -p /var/atlassian/application-data/shared-home/p1-plugins

- Copy a P1 plugin to the newly created directory in shared-home:

    kubectl cp hello.jar \
      atlassian/jira-0:/var/atlassian/application-data/shared-home/p1-plugins/hello.jar

- Add the following to your custom values:

    jira:
      additionalVolumeMounts:
        - name: shared-home
          mountPath: /opt/atlassian/jira/atlassian-jira/WEB-INF/lib/hello.jar
          subPath: p1-plugins/hello.jar

Run helm upgrade to find /var/atlassian/application-data/shared-home/p1-plugins/hello.jar mounted into /opt/atlassian/jira/atlassian-jira/WEB-INF/lib in all pods of Jira StatefulSet.

You can use the same approach to mount any files from a shared-home volume into any location in the container to persist such files across container restarts.

CPU and memory requests ¶

The Helm charts allow you to specify container-level CPU and memory resource requests and limits e.g.

jira:
  resources:
    container:
      requests:
        cpu: "4"
        memory: "8G"

By default, the Helm Charts have no container-level resource limits, however there are default requests that are set.

Specifying these values is fine for CPU limits/requests, but for memory resources it is also necessary to configure the JVM's memory limits. By default, the JVM maximum heap size is set to 1 GB, so if you increase (or decrease) the container memory resources as above, you also need to change the JVM's max heap size, otherwise the JVM won't take advantage of the extra available memory (or it'll crash if there isn't enough).

You specify the JVM memory limits like this:

jira:
  resources:
    jvm:
      maxHeap: "8g"

Another difficulty for specifying memory resources is that the JVM requires additional overheads over and above the max heap size, and the container resources need to take account of that. A safe rule-of-thumb would be for the container to request 2x the value of the max heap for the JVM.
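Applying that rule of thumb, a consistent pair of settings could look like the sketch below, where the container requests twice the JVM max heap. The specific sizes are illustrative only.

```yaml
jira:
  resources:
    jvm:
      maxHeap: "4g"       # JVM max heap
    container:
      requests:
        cpu: "4"
        memory: "8G"      # roughly 2x maxHeap to cover JVM overheads
```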

This requirement to configure both the container memory and JVM heap will hopefully be removed.

You can read more about resource scaling and resource requests and limits.

Additional containers ¶

The Helm charts allow you to add your own container and initContainer entries to the product pods. Use the additionalContainers and additionalInitContainers stanzas within the values.yaml for this. One use-case for an additional container would be to attach a sidecar container to the product pods.
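As a rough sketch, the entries are ordinary Kubernetes container definitions. The container names, image and commands below are hypothetical, and the placement of the two stanzas at the top level of values.yaml (rather than under the product key) is an assumption to verify against your chart.

```yaml
additionalContainers:
  - name: sidecar-example                 # hypothetical sidecar
    image: busybox:1.36
    command: ["sh", "-c", "while true; do date; sleep 60; done"]

additionalInitContainers:
  - name: init-example                    # hypothetical init container
    image: busybox:1.36
    command: ["sh", "-c", "echo preparing"]
```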

Additional options ¶

The Helm charts also allow you to specify:

These are standard Kubernetes structures that will be included in the pods.

Startup, Readiness and Liveness Probes ¶

Readiness Probe ¶

By default, a readinessProbe is defined for the main (server) container for all Atlassian DC Helm charts. A readinessProbe makes HTTP calls to the application status endpoint (/status). A pod is not marked as ready until the readinessProbe receives an HTTP response code greater than or equal to 200, and less than 400, indicating success.

If a pod is not in a ready state, no endpoint is associated with the service for it, and as a result the pod receives no traffic (if this is the only pod in the StatefulSet, you may see a 503 response from the Ingress).

It is possible to disable the readinessProbe (set <product>.readinessProbe.enabled=false), which may make sense if this is the first (cold) start of a DC product in Kubernetes. With a disabled readinessProbe, the pod becomes ready almost immediately after it has been started, and the Ingress URL will take you to a page showing the node start process. We strongly recommend enabling the readinessProbe after the application has been fully migrated and set up in Kubernetes.

Depending on the dataset size, resources allocation and application configuration, you may want to adjust the readinessProbe to work best for your particular DC workload:

readinessProbe:
  # -- Whether to apply the readinessProbe check to pod.
  #
  enabled: true

  # -- The initial delay (in seconds) for the container readiness probe,
  # after which the probe will start running.
  #
  initialDelaySeconds: 10

  # -- How often (in seconds) the container readiness probe will run
  #
  periodSeconds: 5

  # -- Number of seconds after which the probe times out
  #
  timeoutSeconds: 1

  # -- The number of consecutive failures of the container readiness probe
  # before the pod fails readiness checks.
  #
  failureThreshold: 60
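For a workload where the /status endpoint responds slowly under load, the probe could be relaxed along the lines of the sketch below. The numbers are illustrative rather than recommendations, and the placement under the product key follows the <product>.readinessProbe.enabled reference above.

```yaml
jira:
  readinessProbe:
    enabled: true
    initialDelaySeconds: 30   # illustrative: wait longer before the first check
    periodSeconds: 10         # illustrative: probe less frequently
    timeoutSeconds: 5         # illustrative: allow slower /status responses
    failureThreshold: 60
```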

Startup and Liveness Probes ¶

startupProbe and livenessProbe

Both startupProbe and livenessProbe are disabled by default. Make sure you go through the Kubernetes documentation before enabling such probes. Misconfiguration can result in unwanted container restarts and failed "cold" starts.

Self Signed Certificates ¶

There are 2 ways to add self-signed certificates to Java truststore: from a single secret or multiple secrets.

- Create a Kubernetes secret containing base64-encoded certificate(s). Here's an example kubectl command to create a secret from 2 local files:

kubectl create secret generic dev-certificates \

--from-file=stg.crt=./stg.crt \

--from-file=dev.crt=./dev.crt -n $namespace

The resulting secret will have the following data:

data:
  stg.crt: base64encodedstgcrt
  dev.crt: base64encodeddevcrt

You can have as many keys (certificates) in the secret as required. All keys will be mounted as files to /tmp/crt in the container and imported into Java truststore. In the example above, certificates will be mounted as /tmp/crt/stg.crt and /tmp/crt/dev.crt. File extension in the secret keys does not matter as long as the file is a valid certificate.

- Provide the secret name in Helm values (unlike the case with multiple secrets you don't need to provide secret keys):

jira:
  additionalCertificates:
    secretName: dev-certificates

- Create 2 Kubernetes secrets containing base64-encoded certificate(s). Here's an example kubectl command to create 2 secrets from local files (the first one with 2 certificates/keys and the second one with just one):

kubectl create secret generic dev-certificates \

--from-file=stg.crt=./stg.crt \

--from-file=dev.crt=./dev.crt -n $namespace

kubectl create secret generic root-ca \

--from-file=ca.crt=./ca.crt -n $namespace

You can have as many keys (certificates) in the secrets as required; however, you will need to list the keys you'd like to get mounted. All listed keys will be mounted as files to /tmp/crt in the container and imported into the Java truststore.

- Provide the list of secrets and their keys in Helm values:

jira:
  additionalCertificates:
    secretList:
      - name: dev-certificates
        keys:
          - stg.crt
          - dev.crt
      - name: root-ca
        keys:
          - ca.crt

With multiple secrets, each file is named <secret-name>-<key>, so files will get mounted as /tmp/crt/dev-certificates-stg.crt, /tmp/crt/dev-certificates-dev.crt and /tmp/crt/root-ca-ca.crt and imported into the Java truststore with the same aliases.

The product Helm chart will add additional volumeMounts and volumes to the pod(s), as well as an extra init container that will:

- copy the default Java cacerts to a runtime volume shared between the init container and the main container at /var/ssl

- run keytool -import to import all certificates in /tmp/crt mounted from secret(s) to /var/ssl/cacerts

The -Djavax.net.ssl.trustStore=/var/ssl/cacerts system property will be automatically added to the JVM_SUPPORT_RECOMMENDED_ARGS environment variable.

If necessary, it is possible to override the default keytool -import command:

jira:
  additionalCertificates:
    secretName: dev-certificates
    customCmd: keytool -import ...

Atlassian Support and Analytics ¶

Starting from 1.17.0 Helm chart version, by default, an additional ConfigMap is created and mounted into /opt/atlassian/helm in the containers. This ConfigMap has 2 keys: values.yaml and analytics.json that are picked up by the Atlassian Troubleshooting and Support Tools (ATST) plugin. To disable either of the keys or the entire ConfigMap, set enabled to false in the following Helm values stanza:

atlassianAnalyticsAndSupport:
  analytics:
    enabled: true
  helmValues:
    enabled: true

Helm values are mounted so that they can be included in the support.zip. The values file is sanitized both on the Helm chart side (any additionalEnvironmentVariables and additionalJvmArgs that can potentially contain sensitive information are redacted) and by the ATST plugin, which redacts hostnames, URLs, AWS ARNs and sensitive environment variables and JVM flags, if any. If the file is found at /opt/atlassian/helm/values.yaml, you will see an option to include it in the support.zip when generating one in the admin UI.

Analytics json is a subset of values.yaml and contains selected Helm values that are sent as an analytics event and written to analytics logs, if analytics is enabled in the product. Analytics values are purely informational and contain information on how Helm charts are used.

You can find the complete list of analytics values in _helpers.tpl, <product>.analyticsJson.

Tunnels ¶

Jira and Confluence Helm charts support configuring tunnelling. To enable tunneling, set the following in your Helm values file:

jira:
  tunnel:
    additionalConnector:
      port: 8093

An additional connector will be added to server.xml along with Dsecure.tunnel.upstream.port system property. If necessary, connector configuration can be overridden by setting additionalConnector properties to custom values:

jira:
  tunnel:
    additionalConnector:
      port: 8093
      connectionTimeout: "200000"
      maxThreads: "100"
      minSpareThreads: "20"
      enableLookups: "true"
      acceptCount: "100"
      URIEncoding: "UTF-8"
      secure: false